
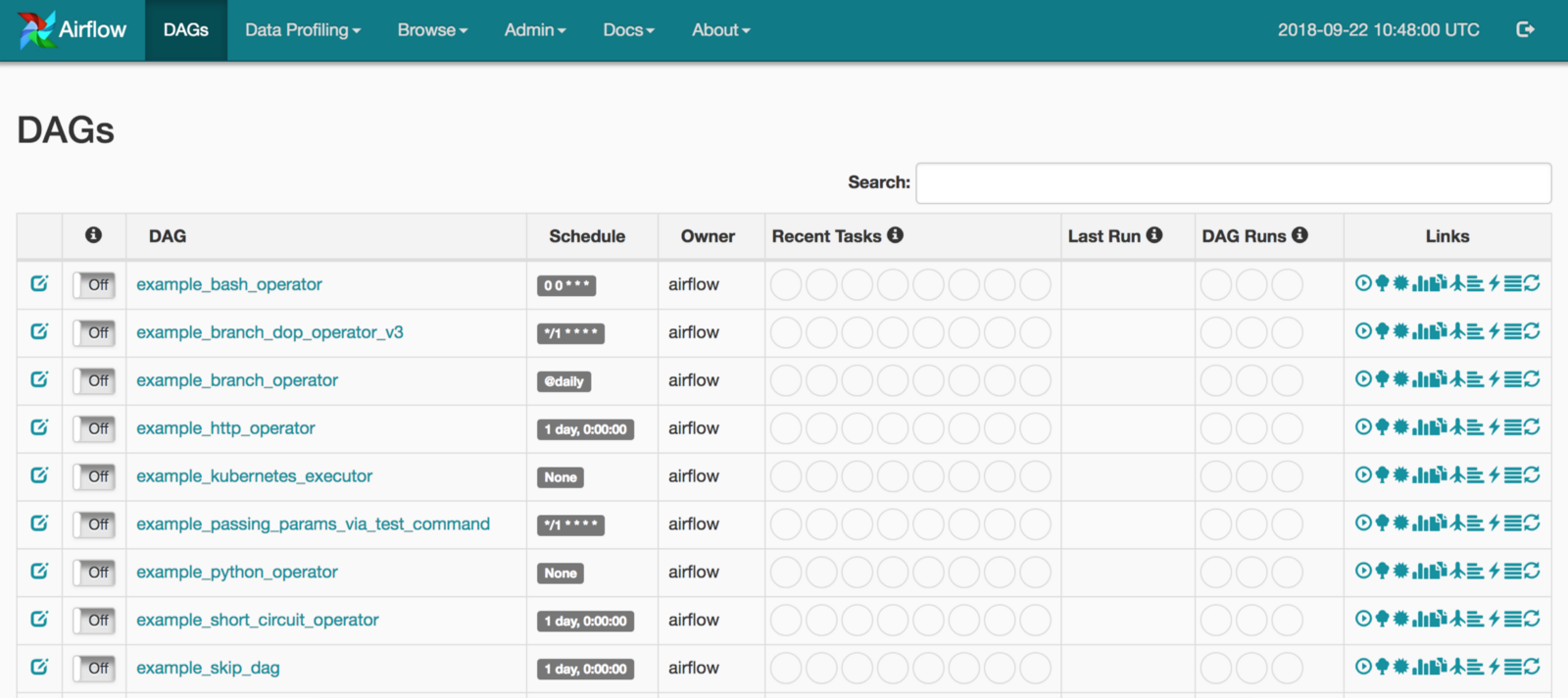

Please visit the following URL (replace with the EIP of the ECS) to access the Airflow web console: The default account has the login airflow and the password airflow: Comment on the part related to postgres:Įxecute the following command to initialize Airflow docker: docker-compose up airflow-initĮxecute the following command to start Airflow: docker-compose up Use the connection string of rds_pg_url_airflow_database in Step 1. envĮdit the downloaded docker-compose.yaml file to set the backend database as the RDS PostgreSQL: cd ~/airflow Please log on to ECS with ECS EIP: ssh and execute the script setup.sh via the following commands to setup Airflow on ECS: cd ~Įcho -e "AIRFLOW_UID=$(id -u)\nAIRFLOW_GID=0" >.

Deploy and setup Airflow on ECS with RDS PostgreSQL The database port for RDS PostgreSQL is 1921 by default. rds_pg_url_airflow_demo_database: The connection URL of the demo database using Airflow.rds_pg_url_airflow_database: The connection URL of the backend database for Airflow.( In this tutorial, we use RDS PostgreSQL as backend database of Airflow and another RDS PostgreSQL as the demo database showing the data migration via Airflow task, so ECS and 2 RDS PostgreSQL instances are included in the Terraform script.) Please specify the necessary information and region to deploy:Īfter the Terraform script execution is finished, the ECS and RDS PostgreSQL instances information are listed below: Run the terraform script to initialize the resources. Use Terraform to provision ECS and database on Alibaba Cloud Deploy and run data migration task in Airflow Prepare the source and target database for Airflow data migration task demo Step 1: Use Terraform to provision ECS and databases on Alibaba Cloud.In this tutorial, we will show the case of using RDS PostgreSQL high availability edition to replace the single node PostgreSQL built-in Docker edition for more stable production purposes.

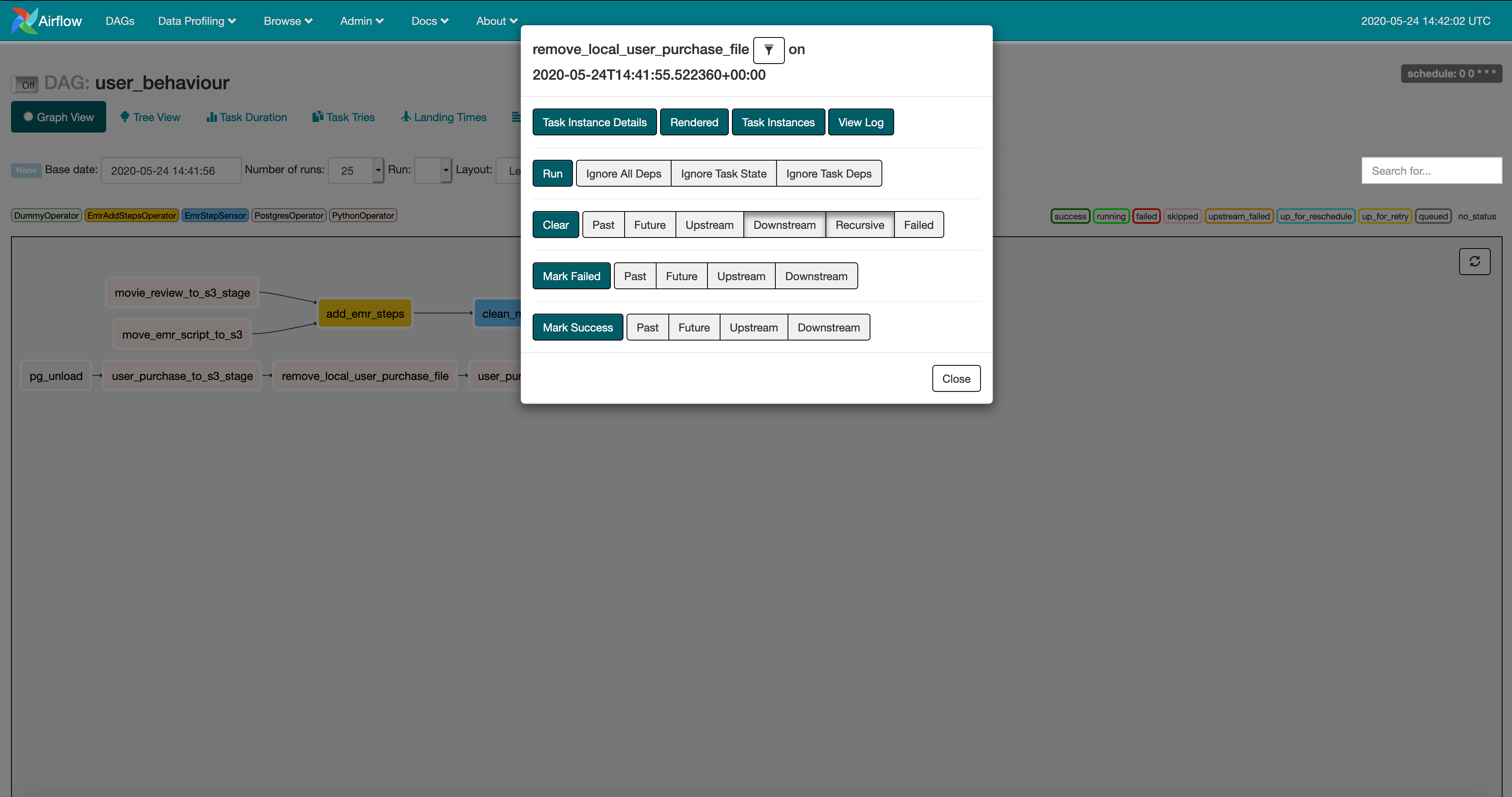

You can use one of the following databases on Alibaba Cloud: We will show the steps of deployment working with Alibaba Cloud Database to enhance the database's high availability behind the Apache Airflow.Īirflow supports PostgreSQL and MySQL. If you are experimenting and learning Airflow, you can stick with the default SQLite option or single node PostgreSQL built-in Docker edition. OverviewĪpache Airflow is a platform created by the community to author, schedule, and monitor workflows programmatically.Īirflow requires a database. Please refer to this link for more tutorials related to Alibaba Cloud Database. You can access the tutorial artifact, including the deployment script (Terraform), related source code, sample data, and instruction guidance from the Github project. We also show a simple data migration task deployed and run in Airflow to migrate data between two databases. This stack also creates an EMR Studio environment that can be used to build and deploy data notebooks.ĭisclaimer: I work for AWS on the EMR team and built this stack for my various demos and it is not intended for production use-cases.This is a tutorial on how to run the open-source project Apache Airflow on Alibaba Cloud with ApsaraDB (Alibaba Cloud Database). I wanted to write a post about how I built my own Apache Spark environment on AWS using Amazon EMR, Amazon EKS, and the AWS Cloud Development Kit (CDK). Overview OpenAQ maintains a publicly accessible dataset of various air quality metrics that’s updated every half hour. With Amazon EMR on EKS, you can now customize and package your own Apache Spark dependencies and I use that functionality for this post. 
8 min Build your own Air Quality Monitor with OpenAQ and EMR on EKSįire season is closely approaching and as somebody that spent two weeks last year hunkered down inside with my browser glued to various air quality sites, I wanted to show how to use data from OpenAQ to build your own air quality analysis.Overview Before you get started, it’s good to have an understanding of the different components of an Airflow task. So here’s a guide on how I made a new operator in the AWS provider package. And weighing in at over half a million lines of code, Airflow is a pretty complex project to wade into. While I’ve been a consumer of Airflow over the years, I’ve never contributed directly to the project.

Recently, I had the opportunity to add a new EMR on EKS plugin to Apache Airflow. Building and Testing a new Apache Airflow Plugin
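As a rough illustration of the Airflow setup described on this page, the sketch below reproduces the text written to `.env` by the `echo` command and builds a backend connection string for RDS PostgreSQL. It rests on assumptions that are not stated in the tutorial: that docker-compose.yaml takes the backend from an `AIRFLOW__CORE__SQL_ALCHEMY_CONN` style variable (newer Airflow releases name it `AIRFLOW__DATABASE__SQL_ALCHEMY_CONN`), and that the user, password, host, and database name shown are placeholders rather than values from the Terraform output. The helper names `env_file_contents` and `backend_conn_string` are hypothetical.

```python
from urllib.parse import quote


def env_file_contents(uid: int, gid: int = 0) -> str:
    """Return the text the tutorial's echo command writes to .env."""
    return f"AIRFLOW_UID={uid}\nAIRFLOW_GID={gid}\n"


def backend_conn_string(user: str, password: str, host: str,
                        database: str, port: int = 1921) -> str:
    """Build a SQLAlchemy-style PostgreSQL URL for the Airflow backend.

    All arguments are placeholders (assumptions for this sketch); the real
    values come from rds_pg_url_airflow_database in Step 1. The default
    port 1921 is the RDS PostgreSQL port named in the tutorial.
    """
    # Percent-encode the credentials so characters such as '@' or '/'
    # cannot break the structure of the URL.
    safe_user = quote(user, safe="")
    safe_password = quote(password, safe="")
    return f"postgresql+psycopg2://{safe_user}:{safe_password}@{host}:{port}/{database}"


if __name__ == "__main__":
    # 1000 stands in for the output of `id -u` on the ECS instance.
    print(env_file_contents(1000), end="")
    # Hypothetical credentials and host, for illustration only.
    print(backend_conn_string("airflow", "p@ss/word", "rds-host.example.com", "airflow"))
```

The resulting string is what would replace the built-in `postgres` service address in docker-compose.yaml once the postgres section is commented out; check the variable names against the compose file that setup.sh actually downloads.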
